On Thursday Google announced the addition of a set of new user experience metrics to its growing list of ranking factors.

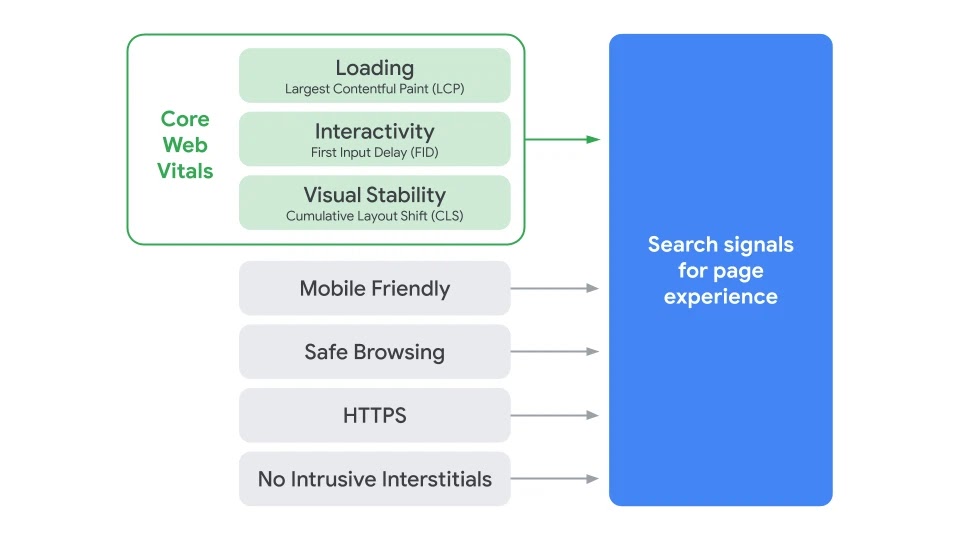

The additions – which Google is referring to as “Page Experience” metrics – are designed to evaluate how users perceive browsing, loading and interacting with specific webpages, and incorporate criteria measuring:

- Page load times

- Mobile friendliness

- Incorporation of HTTPS

- The presence of intrusive ads or interstitials

- Intrusive moving of page content or page layout

Webmasters should already be familiar with many of these factors: in recent years Google has driven home the importance of mobile friendliness, page speed, HTTPS adoption and the avoidance of intrusive interstitials.

However, the new Page Experience signal also draws on metrics from the new “Core Web Vitals” report, recently incorporated into Google’s PageSpeed Insights and Search Console tools.

What are Core Web Vitals?

Core Web Vitals are a trio of metrics designed to evaluate a user’s experience of loading, interaction, and page stability when visiting a web page:

- Largest Contentful Paint (LCP): This measures the perceived loading performance of a page, or the time that passes before the main page content is visible to users. An LCP of 2.5 seconds or less is viewed as good, with anything higher in need of improvement.

- First Input Delay (FID): This measures interactivity and load responsiveness, or the delay between a user’s first interaction with a page and the browser being able to respond to it. An FID of less than 100ms is optimal, with higher scores in need of improvement.

- Cumulative Layout Shift (CLS): This measures visual stability, or whether the layout of a page moves or changes while a user is trying to interact with it. Pages should aim for a CLS of less than 0.1 in order to provide a good user experience.
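As a rough illustration, the three "good" thresholds above can be turned into a simple check. This is only a sketch: the `PageMeasurement` class, the `needs_improvement` function and the sample values are hypothetical names and numbers made up for this example, and treating a reading exactly on the threshold as good is an assumption.

```python
from dataclasses import dataclass
from typing import List

# "Good" thresholds as quoted in this article. Treating the boundary value
# itself as good is an assumption of this sketch.
GOOD_THRESHOLDS = {
    "lcp_seconds": 2.5,  # Largest Contentful Paint, in seconds
    "fid_ms": 100.0,     # First Input Delay, in milliseconds
    "cls": 0.1,          # Cumulative Layout Shift (unitless score)
}


@dataclass
class PageMeasurement:
    """Hypothetical container for one page's Core Web Vitals readings."""
    lcp_seconds: float
    fid_ms: float
    cls: float


def needs_improvement(page: PageMeasurement) -> List[str]:
    """Return the names of the metrics that exceed their 'good' threshold."""
    return [
        name
        for name, limit in GOOD_THRESHOLDS.items()
        if getattr(page, name) > limit
    ]


if __name__ == "__main__":
    # Made-up readings, purely for illustration.
    sample = PageMeasurement(lcp_seconds=3.1, fid_ms=80, cls=0.24)
    print(needs_improvement(sample))  # ['lcp_seconds', 'cls']
```

In practice the readings would come from a tool such as PageSpeed Insights or Search Console rather than being typed in by hand.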

Largest Contentful Paint and First Input Delay will be recognisable to most webmasters, as Google’s PageSpeed and Lighthouse tools already provide information on these metrics.

However, Cumulative Layout Shift appears to be new, with Google’s John Mueller stating that the CLS metric has been created to gauge levels of user “annoyance”. CLS looks at the familiar experience of content shifting as a page loads, which Google illustrate with the below GIF:

What does this change?

Whilst most of the individual metrics within Page Experience are pre-existing ranking factors, the new announcement places them together as one part of an overarching signal:

Google state that they are aiming to provide a more “holistic picture of the quality of a user’s experience on a web page”, by grouping previously separate factors together.

Each factor will be weighted uniquely, although as Google have declined to comment on how this weight will be distributed, it will likely be up to webmasters to determine the importance of each.

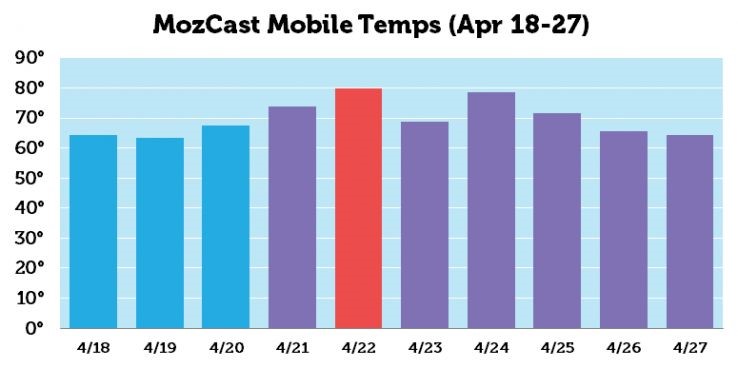

The new signal is also set to bring changes to how mobile top stories are determined, with the adoption of AMP (Accelerated Mobile Pages) no longer a prerequisite for inclusion within this section.

In future, top stories will be based on an evaluation of Page Experience factors, with non-AMP pages able to appear alongside AMP pages.

When will Page Experience roll out?

Google state that changes around Page Experience “will not happen before next year”, and promise to give at least 6 months’ notice before any rollout takes place.

This gives webmasters plenty of time to get ready for the changes, with preparation hopefully made easier through the early incorporation of Page Experience into tools like Google Search Console, Lighthouse, and PageSpeed Insights.
